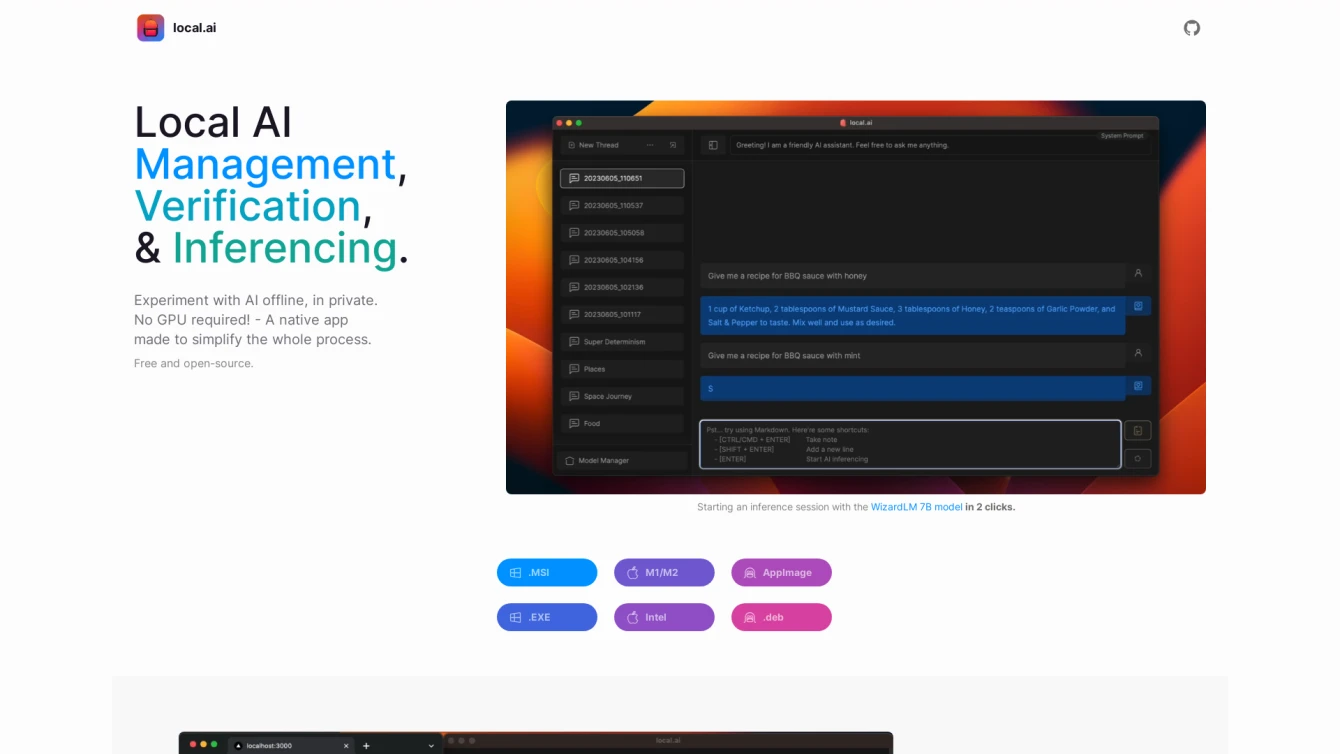

Streamlining local AI experimentation, model management, and inferencing.

- Added on July 26, 2023

- Updated on September 18, 2023

Product information and features

Localai is an AI tool designed to streamline your interactions with AI models in a local setting. It is a native app that lets you run AI experiments without complex technical setup and without specialized hardware such as a GPU.

Localai is free, open-source software with an efficient Rust backend. It is compact, at less than 10 MB on Mac M2, Windows, and Linux, and memory-efficient, so it runs in a range of computing environments.

Localai runs inference on the CPU and adapts to the number of available threads. It supports GGML quantization, with options for q4, q5.1, q8, and f16. It also includes model management, so you can manage your AI models from one centralized location.
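To get a feel for what the quantization options mean for memory, the sketch below estimates the weight footprint of a model at each level. It is a rough illustration, not Localai's own code: the function name `estimate_weights_gib` and the nominal bit widths are assumptions, and real GGML files are somewhat larger because each block of weights also stores scale metadata.

```python
# Nominal bits per weight for each quantization level (assumed values;
# real GGML formats add per-block scale data on top of these).
NOMINAL_BITS = {"q4": 4, "q5.1": 5, "q8": 8, "f16": 16}


def estimate_weights_gib(n_params: int, quant: str) -> float:
    """Return the approximate size of the weights in GiB."""
    bits = NOMINAL_BITS[quant]
    return n_params * bits / 8 / 2**30


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model.
    for quant in NOMINAL_BITS:
        size = estimate_weights_gib(7_000_000_000, quant)
        print(f"{quant}: ~{size:.1f} GiB")
```

Under these assumptions, moving from f16 to q4 cuts the weight footprint to roughly a quarter, which is why quantized models are practical for CPU-only machines.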

Model management provides resumable, concurrent model downloads and usage-based sorting, and it is agnostic to directory structure. To verify the integrity of downloaded models, Localai offers digest verification using the BLAKE3 and SHA256 algorithms.
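Digest verification of this kind can be sketched in a few lines. The example below is an illustration only, not Localai's implementation: the helper names `sha256_digest` and `verify_model` are hypothetical, and it covers SHA-256 only, because BLAKE3 is not part of Python's standard library.

```python
import hashlib
from pathlib import Path


def sha256_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so a large model file never sits fully in memory."""
    hasher = hashlib.sha256()
    with path.open("rb") as f:
        while chunk := f.read(chunk_size):
            hasher.update(chunk)
    return hasher.hexdigest()


def verify_model(path: Path, expected_hex: str) -> bool:
    """Compare the computed digest with a published one (case-insensitive)."""
    return sha256_digest(path) == expected_hex.strip().lower()
```

A caller would pass the path of a downloaded model file and the digest published alongside it. A `False` result suggests the download is corrupt or incomplete.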

Additionally, Localai includes an inferencing server feature. This allows you to initiate a local streaming server for AI inferencing in just a couple of clicks. It provides a quick inference UI, supports writing to .mdx files, and includes options for inference parameters and remote vocabulary.

In summary, Localai is a user-friendly tool for local AI experimentation. It combines model management, digest verification, and an inferencing server in a single native app.
